Why AI rollouts stall: Checklist

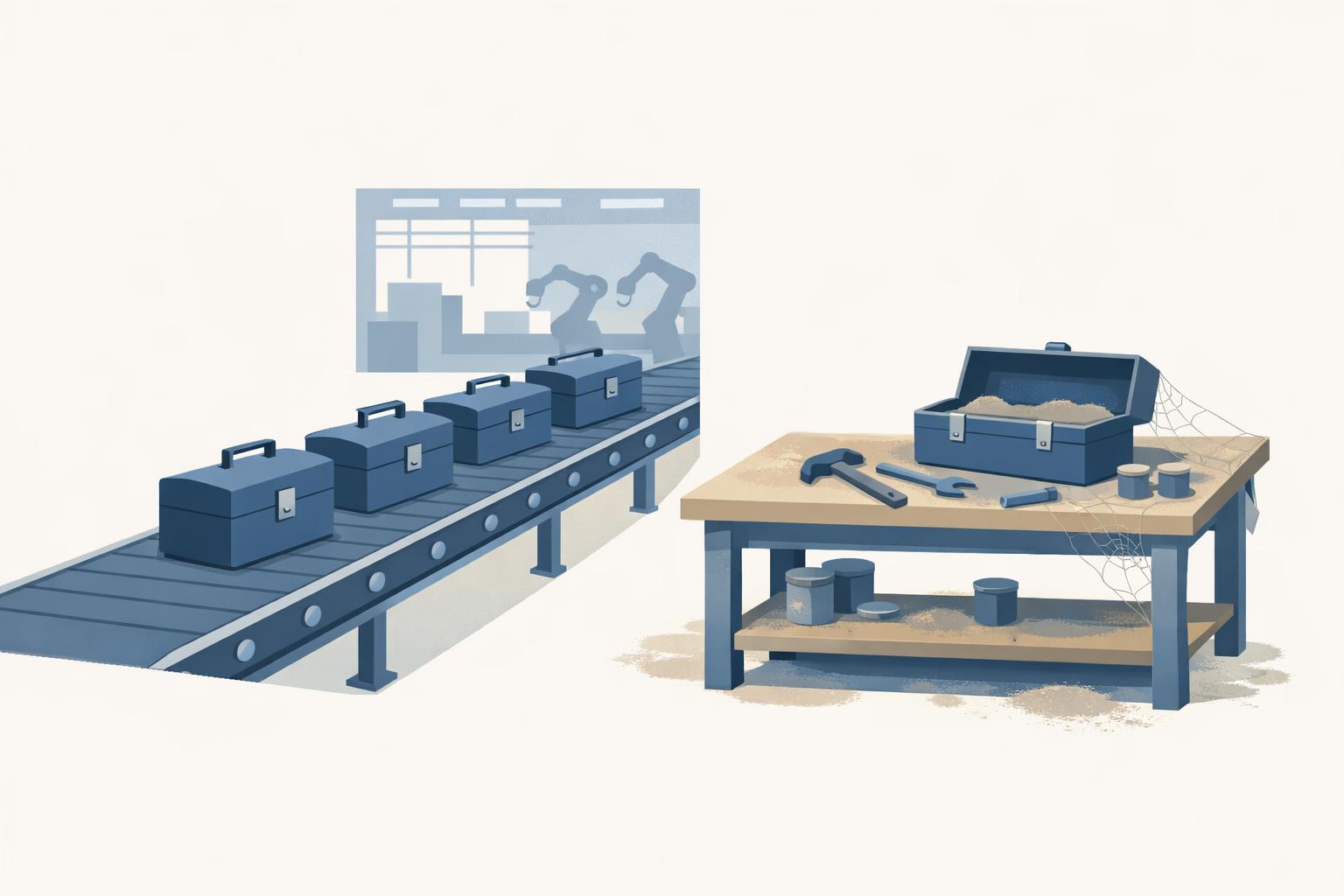

AI rollouts usually stall because teams get tool access without workflow change, so the dashboard looks healthy while the day-to-day work stays exactly the same.

Table of contents

- TL;DR

- Why do AI rollouts stall even when usage looks high?

- Which non-obvious blockers make AI tools not being used?

- How do you tell whether workflow change with AI tools is actually happening?

- What should you do next if the rollout is stuck?

- Bottom line

- FAQ

By 2025, 88% of companies reported regular AI use in at least one business function, yet most still had not scaled it into material business impact, according to McKinsey’s State of AI 2025. AI rollouts stall when teams stop at tool access - licences issued, pilots launched, training completed - without changing the actual workflow for recurring work. If AI is not altering how briefs are written, tickets are resolved, campaigns are shipped, or reports are reviewed, the rollout is stalled even if your usage dashboard says adoption looks fine.

That pattern shows up in both US and European teams. A US software company can point to ChatGPT Enterprise seats, GitHub Copilot usage, and a full enablement week, while engineers still write specs, debug, and review code exactly as before. A DACH insurer can roll out Microsoft Copilot to claims, HR, and legal, yet still see sensitive tasks bounce back to manual review because nobody redesigned the approval path, prompt context, or output checks. People are experimenting, but the team has not moved from occasional prompting to repeatable workflow change.

This checklist will help you test that gap properly. You will learn how to spot surface-level adoption, where training is masking weak execution, and which signals actually show progress - not just logins or self-reported confidence.

TL;DR

- Define one recurring workflow per team that AI must change, then measure speed, quality, and handoffs before calling the rollout a success.

- Audit manager behaviour: require leaders to show what good AI use looks like in reviews, approvals, and daily task allocation, not just in town halls.

- Replace self-reported adoption with evidence from actual work: compare prompts, outputs, and completed tasks against pre-rollout baselines, the way GitHub Copilot usage is tracked.

- Separate tasks into automate, assist, or leave alone, and stop forcing AI into sensitive steps that still need manual review, like legal sign-off.

- Recheck quarterly and stop training that raises enthusiasm but does not move throughput, quality, or decision time.

Why do AI rollouts stall even when usage looks high?

AI rollouts can look healthy in dashboards and still be stalled. The tell is simple: people are touching the tool, but the workstream has not moved. In practice, “high usage” often means a small group drafting emails, briefs, code comments, or meeting summaries in ChatGPT or Copilot while intake, review, approvals, handoffs, and final decisions still follow the pre-AI path. That is why the useful question is not “are people using AI?” but “which recurring tasks are now done differently because of AI?” McKinsey’s 2025 State of AI and Harvard Business Review, 2026 point to the same pattern: broad use without embedded ways of working.

This is where survey data misleads. Self-reported adoption captures intent and optimism better than behaviour inside the workflow. A team member who uses Copilot twice a week may answer “regular user,” but that tells you nothing about whether ticket triage, campaign production, month-end reporting, or legal review now runs faster or with fewer handoffs.

A quick check before you call a rollout successful:

- Can you name the workflow that should change, not just the tool deployed?

- Do you have a baseline for speed, quality, or throughput from before rollout?

- Is there a business owner outside IT?

- Do managers know what good AI use looks like in support, finance, HR, or engineering?

- Have you separated tasks to automate, assist, or leave alone?

In one Munich software team, the rollout only became real where support managers defined exactly how AI could triage and draft responses; elsewhere, “encourage experimentation” produced activity and almost no workflow change.

Which non-obvious blockers make AI tools not being used?

The hidden blockers are usually organisational permissions, not missing features. Teams stop short when managers have not clarified what good AI use looks like, what can be trusted, and who owns the risk. That is why licences, prompt libraries, and even well-attended training weeks often fail to convert into visible use on live work. BCG’s 2025 adoption research makes this point clearly: differences in motivation, confidence, empowerment, and support explain why one team moves and the next freezes.

Anxiety is part of that pattern, especially where AI changes how expertise is seen. The Harvard Business Review product summary from February 2026 points to relevance, identity, and job-security fears driving surface-level use without real commitment. In one Munich-based software company we worked with, support staff changed ticket triage quickly, but product and marketing stayed at generic drafting because nobody knew which AI outputs were acceptable in live workflows and nobody wanted to look replaceable.

The trust break often starts with data, not the model. In Gartner’s April 2026 I&O survey, 38% of leaders said poor data quality or limited data availability directly caused AI project failure. Once a team sees a few wrong answers caused by stale CRM fields, missing knowledge-base articles, or inconsistent naming, they often conclude “AI is unreliable.”

Then comes the skills trap. Deloitte’s 2026 State of AI in the Enterprise says insufficient worker skills are the biggest barrier to integrating AI into workflows, but the useful reading is narrower: this is usually a judgment gap, not a prompting gap. Teams do not need another generic session on prompts; they need practice decomposing tasks, spotting failure modes, and deciding when output is good enough to ship, escalate, or discard. Without manager-approved time to learn and clear governance on data, review, and approval, adoption stays performative.

How do you tell whether workflow change with AI tools is actually happening?

Workflow change is happening only when you can see it in the work itself, not when people say they “use AI regularly.” In practice, the cleanest test is whether AI has altered task sequence, artefacts, and decision points on a live workflow. If the tool is absent from the handoff, approval, or final output, adoption is still shallow. That distinction matters because many failed pilots looked active in demos but never changed line-manager-owned work, a pattern highlighted in Fortune’s coverage of MIT research on generative AI pilots and MIT Sloan Management Review’s field lessons on the human side of AI adoption.

- Check the order of operations. Pick one recurring task and ask: did drafting, review, approval, or escalation move? If the team still does the old sequence and only pastes text into ChatGPT midway, nothing structural changed.

- Check whether outputs leave the chat window. Real adoption means AI-generated material is reused in Jira tickets, CRM notes, PRDs, legal redlines, or customer replies. If outputs stay in a side chat and never enter system-of-record artefacts, you are measuring experimentation, not workflow change. That is why engineering teams usually need delivery metrics such as cycle time, PR throughput, and task completion rate, while non-technical teams need equivalent artefact-level checks.

- Ask managers for one before/after example. Not a sentiment score. One concrete case: “quotes approved same day instead of next day,” or “ticket classification no longer waits for senior review.” If no manager can produce that example, the rollout has not crossed into operational change. McKinsey’s 2023 State of AI found inaccuracy was cited more often than cybersecurity or compliance as a gen AI risk, which is why teams that do change workflow usually add verification steps rather than remove judgment.

- Look for patterns, not heroes. In one 240-person Munich software company we assessed, the only durable shift was inside support: AI changed triage and reply drafting there, while product and marketing stayed at generic drafting. Champions usually emerge from teams already changing live work, not from the people most willing to attend AI week.

What should you do next if the rollout is stuck?

Once you’ve identified the failure mode, pick the intervention that fits it. That usually means targeted workshops, champion activation, or a 3-6 month roadmap - not another generic training wave.

- If access is high but workflow change is low, freeze licence expansion and fix one live process. Don’t buy another seat of ChatGPT Enterprise or Copilot until one critical workflow has an approved AI path through drafting, review, handoff, and sign-off. In DACH teams, this is often where governance debt shows up late: works council, BDSG, or AI Act questions arrive after procurement, managers get nervous, and usage stays stuck at harmless drafting. Define three things before scaling: what is allowed, what requires human review, and who owns approval on live work.

- If usage is uneven, build around the pockets already above baseline. The strongest champions are rarely on the steering committee; they sit in support, ops, or a product sub-team and have already figured out repeatable patterns. One mid-market software company we worked with had exactly that: after an AI week, most teams stayed at generic drafting, but support had quietly changed ticket triage and response prep. Turn those people into a 6-week champion cohort, have them document prompts, review criteria, and failure cases, then let adjacent teams copy a proven method instead of attending another broad awareness session.

- If confidence is low or your evidence is weak, stop relying on self-report. Run short observed interviews, review actual artefacts, and re-measure quarterly. Generic AI literacy sessions do little for someone who needs to judge model output in customer support, legal review, or PR review. Teach the exact task, with the team’s own documents, then check three months later whether outputs, cycle time, or handoffs changed. If you cannot show movement in the work itself, the rollout is still stuck.
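The three things to define before scaling (what is allowed, what requires human review, and who owns approval) can be written down per workflow step, which makes gaps easy to spot. The sketch below is one possible way to do that in Python, not a prescribed format: `Mode`, `StepPolicy`, `policy_gaps`, and every step and role name are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from enum import Enum
from typing import Optional


class Mode(Enum):
    """How AI may be used on a step (the automate / assist / leave alone split)."""
    AUTOMATE = "automate"
    ASSIST = "assist"
    LEAVE_ALONE = "leave alone"


@dataclass(frozen=True)
class StepPolicy:
    """Rules for one step of a live workflow (all fields are illustrative)."""
    step: str                # hypothetical step name, e.g. "draft reply"
    mode: Mode               # what is allowed
    human_review: bool       # whether a person must check the output
    approver: Optional[str]  # role that owns approval on live work, if any


def policy_gaps(policy: list[StepPolicy]) -> list[str]:
    """Return the steps where AI is allowed but nobody owns approval."""
    return [
        p.step
        for p in policy
        if p.mode is not Mode.LEAVE_ALONE and not p.approver
    ]


# Hypothetical support workflow used only to show the check.
policy = [
    StepPolicy("triage ticket", Mode.AUTOMATE, human_review=False, approver="support lead"),
    StepPolicy("draft reply", Mode.ASSIST, human_review=True, approver=None),
    StepPolicy("legal sign-off", Mode.LEAVE_ALONE, human_review=True, approver=None),
]
print(policy_gaps(policy))  # ['draft reply']
```

Here "draft reply" is flagged because AI assistance is allowed on it but no role owns the approval, which is the kind of gap that tends to keep usage at harmless drafting.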

Bottom line

AI rollouts stall when teams stop at tool access and never change the recurring workflow. The next move is to pick one high-volume task per team, measure speed, quality, and handoffs against a baseline, and use actual work evidence - not self-reported usage - to decide whether the rollout is real or just busy. If you can’t see that clearly from logs, outputs, and manager behaviour, that’s usually the point where outside help is useful; AI Beavers measures adoption from voice interviews and maps the gaps to the right intervention.


FAQ

How do you measure AI adoption beyond login counts?

Use a task-level baseline, not a seat-level one. Track a sample of recurring work items before and after rollout - for example, 20 briefs, 20 tickets, or 20 reports - and compare cycle time, rework rate, and approval latency. If you want a more reliable signal, add artefact review with a simple rubric in Notion, Airtable, or Google Sheets so you can score output quality consistently across teams.
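As a rough illustration of that comparison, the Python sketch below summarises a before sample and an after sample on the three metrics named above. It assumes you have already collected the durations by hand or from a ticketing export; the `WorkItem` fields and both function names are hypothetical, and using the median for durations is a choice made here to dampen outliers in small samples, not something the sources prescribe.

```python
from dataclasses import dataclass
from statistics import median


@dataclass
class WorkItem:
    """One sampled brief, ticket, or report (field names are illustrative)."""
    cycle_hours: float     # start of work to final delivery
    approval_hours: float  # time spent waiting for sign-off
    reworked: bool         # True if the item was sent back at least once


def summarise(items: list[WorkItem]) -> dict[str, float]:
    """Summarise one sample: medians for durations, a proportion for rework."""
    if not items:
        raise ValueError("need at least one work item")
    return {
        "median_cycle_hours": median(i.cycle_hours for i in items),
        "median_approval_hours": median(i.approval_hours for i in items),
        "rework_rate": sum(i.reworked for i in items) / len(items),
    }


def compare(before: list[WorkItem], after: list[WorkItem]) -> dict[str, float]:
    """Return after-minus-before per metric; negative values mean improvement."""
    b, a = summarise(before), summarise(after)
    return {name: a[name] - b[name] for name in b}
```

With 20 items per sample the differences are directional rather than statistically conclusive, so they are best read alongside the artefact review rather than instead of it.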

What is the difference between AI usage and AI workflow change?

Usage means someone opened the tool; workflow change means the tool changed who does what, in what order, and with what quality check. A practical test is whether the team can remove a manual step without performance dropping - if not, AI is still a sidecar, not part of the process. In regulated functions, that often means redesigning approval gates and evidence capture before you expect any real throughput gain.

What are the best KPIs for AI rollout success?

The most useful KPIs are usually cycle time, first-pass quality, and handoff count, because they show whether work is actually moving differently. For customer-facing or content-heavy teams, you can also track revision rounds per output and time spent in review. If leadership wants a single number, use a before-and-after median on one high-volume workflow instead of a broad adoption score.
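For the single-number case, a before-and-after median reduces to one percentage. The function below is a minimal sketch of that arithmetic; the name `median_change_pct` and the sample values are assumptions for illustration only.

```python
from statistics import median


def median_change_pct(before: list[float], after: list[float]) -> float:
    """Percent change in the median from before to after (negative = lower)."""
    base = median(before)
    if base == 0:
        raise ValueError("baseline median is zero; percent change is undefined")
    return (median(after) - base) / base * 100


# Hypothetical cycle times in hours for one high-volume workflow.
print(median_change_pct([10, 20, 30], [5, 15, 40]))  # -25.0
```

In this made-up sample the median falls from 20 to 15 hours, a 25% drop, even though the slowest item got slower, which is why the median is a steadier headline figure than the mean.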