Team AI maturity tiers: The complete guide

Table of contents

- TL;DR

- What are team AI maturity tiers?

- Why do most AI maturity scores overstate reality?

- How do you identify AI adoption levels without fooling yourself?

- The AI maturity matrix: which economies are ready for AI?

- Are you overestimating your responsible AI maturity?

- Bottom line

- FAQ


In BCG’s 2024 survey of 1,000 CxOs and senior executives across 59 countries, 74% of companies said they still struggle to get tangible value from AI, and only 26% had built the capabilities to move beyond pilots and proofs of concept (BCG, 2024).

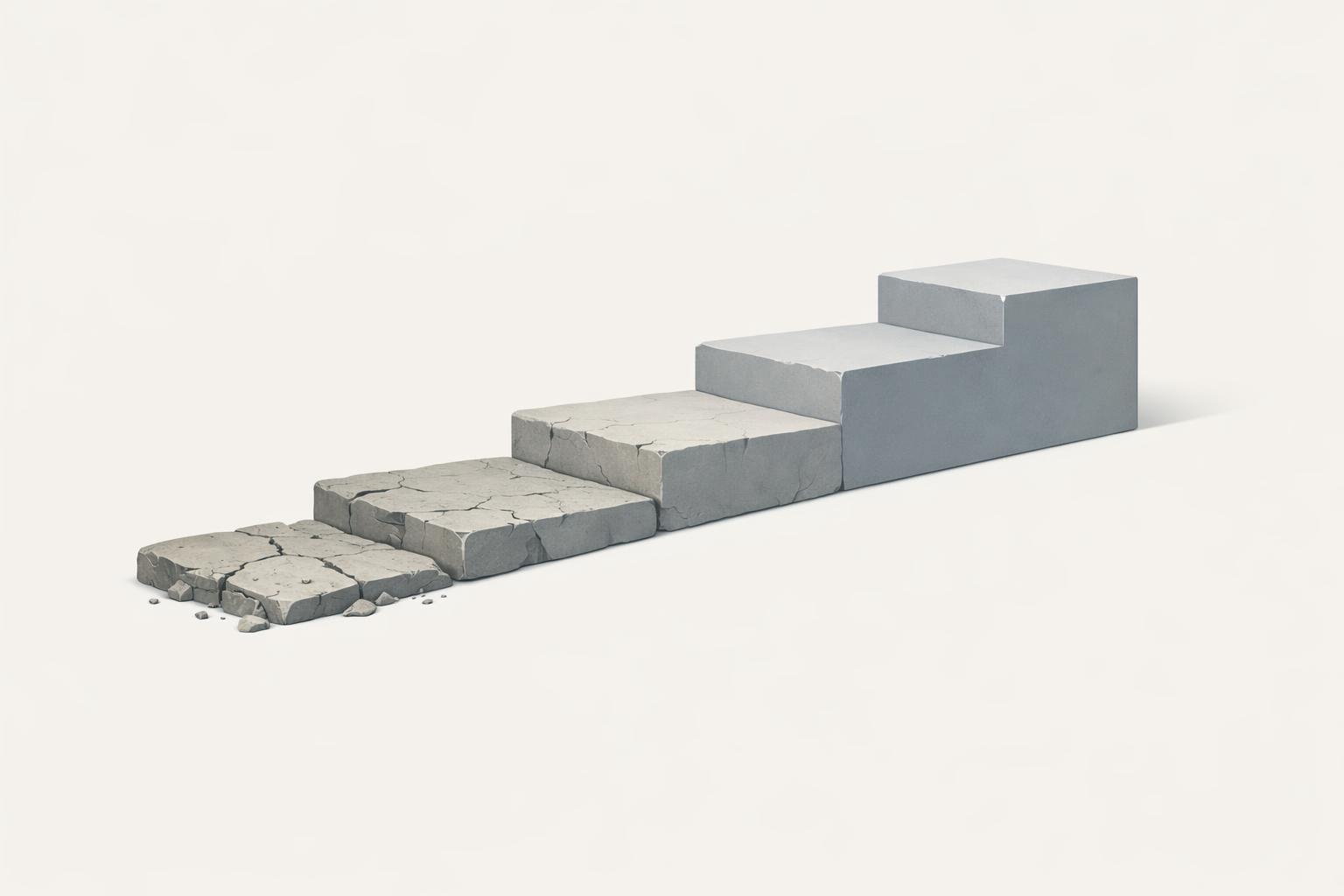

Team AI maturity tiers are a simple classification of how consistently a team turns AI tools into real work outputs - not whether people say they “use AI,” but whether they can apply it inside repeatable tasks, with judgment, context, and systematised workflows. The failure mode this guards against is familiar: dashboards show logins, internal surveys say adoption is “good,” but the actual work still happens the old way.

This guide will show you how to identify maturity tiers such as champion, growing, stuck, and surface; how to tell behaviour signals from self-reported confidence; and how to map each tier to the right intervention. We will also connect team-level adoption to broader maturity research - for example, Accenture reported in 2022 that more than 60% of companies were still only experimenting with AI, while just 12% had reached a maturity level associated with strong competitive advantage.

TL;DR

- Audit real workflows, not licence counts, and classify each team as surface, stuck, growing, or champion using observed outputs and task evidence.

- Replace self-assessment forms with AI voice interviews that capture how people actually use tools, where judgment breaks, and which tasks stay manual.

- Map each tier to a specific intervention: fluency training for surface teams, workflow redesign for stuck teams, and champion activation for growing teams.

- Re-measure quarterly and track before/after deltas so you can prove which workshops, playbooks, or governance changes moved adoption.

- Build internal champion networks around repeatable prompts, output standards, and teachable workflows before scaling AI to the rest of the team.

What are team AI maturity tiers?

The useful unit of analysis is not the company or the licence count. It is the team workflow. Team AI maturity tiers are a way to classify whether AI is still an optional helper, a fragile habit, or a built-in part of how work moves. Vendor maturity models already point in this direction: Gartner’s AI maturity toolkit scores operating model, culture, and value creation alongside technology, and Microsoft’s 2025 Copilot Studio maturity guidance describes progression from early experimentation to optimised operation.

In plain language, the four tiers are these. Surface means people have access and curiosity, but usage is mostly chat, search, or one-off drafting. Stuck means they use AI often enough to say they “use it daily”, but the underlying workflow is still manual, so quality and speed depend on individual effort rather than a repeatable process. Growing means a few people have working patterns others can copy, but usage is uneven and fragile. Champion means the team has repeatable prompts or playbooks, clear judgment about when outputs are good enough, and internal people who can teach others. Microsoft explicitly recommends building internal champion networks to spread working practices, not just tool awareness, in its Inside Track guidance for IT leaders.

The point of tiering is operational. Surface teams need fluency and safe experimentation. Stuck teams need workflow redesign around real tasks. Growing teams need shared standards, governance paths, and champion activation. Champion teams need measurement and optimisation. That is also the dividing line between “AI as convenience” and “AI as value creation”: Deloitte’s 2025 analysis of nearly 550 leaders found mature adopters treat AI as a revenue and value creator, not only a productivity tool.
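The tier-to-intervention mapping described above can be written down as a small lookup. This is a minimal Python sketch: the `Tier` enum, `INTERVENTIONS`, and `plan` are illustrative names, and only the tier labels and intervention wording come from the text.

```python
from enum import Enum


class Tier(Enum):
    SURFACE = "surface"
    STUCK = "stuck"
    GROWING = "growing"
    CHAMPION = "champion"


# Intervention wording follows the paragraph above; the structure is an assumption.
INTERVENTIONS = {
    Tier.SURFACE: "fluency training and safe experimentation",
    Tier.STUCK: "workflow redesign around real tasks",
    Tier.GROWING: "shared standards, governance paths, and champion activation",
    Tier.CHAMPION: "measurement and optimisation",
}


def next_intervention(tier: Tier) -> str:
    """Return the intervention that matches a team's current tier."""
    return INTERVENTIONS[tier]


def plan(team_tiers: dict[str, Tier]) -> dict[str, str]:
    """Map each team name to its next intervention."""
    return {team: next_intervention(tier) for team, tier in team_tiers.items()}


# Hypothetical teams, for illustration only.
example = {"support": Tier.STUCK, "product": Tier.GROWING}
for team, action in plan(example).items():
    print(f"{team}: {action}")
```

Keeping the mapping in one place makes it harder to default every team to generic training, whatever tier the evidence puts them in.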

Why do most AI maturity scores overstate reality?

Most AI maturity scores are wrong for a simple reason: they measure what people say about AI, not what changed in the work. A team can honestly report “we use Copilot every day” and still be doing the same job as before, just with faster first drafts. That is not maturity. It is tool exposure. The useful question is narrower and harder: did AI change how the team briefs tasks, decomposes work, reviews outputs, verifies facts, and hands work off to the next person? Frameworks from vendors such as Microsoft Learn’s adoption maturity model and broader scorecards like the GitHub AI Maturity Model are only as good as the evidence you feed them.

The warning sign is not theoretical. In BCG’s responsible AI research, 55% of organisations were less advanced than they believed, which is exactly what you would expect when maturity is self-scored by managers or captured through checkbox forms. McKinsey’s global AI trust survey also defines higher maturity partly by cross-team risk processes and operational controls, not just stated usage, which matters because governance maturity shows up in workflow design long before it shows up in confidence scores (McKinsey Global AI Trust Maturity Survey).

The gap shows up fast in interviews. A 42-person B2B software team we worked with had strong attendance in training and high self-reported confidence six months after rollout, but when we checked real tasks, most people were still using AI for email drafts and meeting summaries rather than changing ticket handling, spec writing, or customer follow-up. The few people who were genuinely advanced had repeatable prompts, explicit verification steps, and clear judgment about when not to trust the model. That is why evidence beats opinion: observed behaviour, reviewed artefacts, and verified outputs tell you where adoption is actually embedded, and where it is still performative.

How do you identify AI adoption levels without fooling yourself?

You identify AI adoption levels by watching people do real work, not by asking them what they think they do. The signal is in task-level evidence: prompts, outputs, edits, handoffs, and whether the same behaviour shows up again across people and roles. That gives you a score you can trust and, more importantly, a clear next intervention.

| Evidence type | What it tells you | Weight |
|---|---|---|
| Real work artefacts | Whether AI changed the output | Highest |
| Workflow proof | Whether usage is systematised | High |
| Self-description | Whether people believe they use AI | Lowest |

A practical way to avoid fooling yourself is to score six dimensions, not raw frequency. A daily user may still be weak on output judgment or task decomposition. A workable rubric is: tool fluency, context engineering, workflow systematisation, output judgment, task decomposition, and applied adoption. We often see one product or support lead score high because they verify outputs and reuse patterns, while adjacent teams with the same licence remain shallow.
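One way to make the six-dimension rubric concrete is a small record per person that reports the weakest dimensions instead of raw frequency. The sketch below is hypothetical: the 1-5 scale, the `RubricScore` class, and the example values are assumptions, and only the six dimension names come from the text.

```python
from dataclasses import dataclass, fields


@dataclass(frozen=True)
class RubricScore:
    """One person's scores on the six dimensions (the 1-5 scale is an assumption)."""

    tool_fluency: int
    context_engineering: int
    workflow_systematisation: int
    output_judgment: int
    task_decomposition: int
    applied_adoption: int

    def __post_init__(self) -> None:
        # Reject scores outside the assumed 1-5 range.
        for f in fields(self):
            value = getattr(self, f.name)
            if not 1 <= value <= 5:
                raise ValueError(f"{f.name} must be between 1 and 5, got {value}")

    def as_dict(self) -> dict[str, int]:
        return {f.name: getattr(self, f.name) for f in fields(self)}

    def weakest(self) -> list[str]:
        """Return every dimension tied for the lowest score."""
        scores = self.as_dict()
        low = min(scores.values())
        return [name for name, value in scores.items() if value == low]


# Hypothetical daily user: fluent with the tool, weak on judgment and decomposition.
daily_user = RubricScore(
    tool_fluency=5,
    context_engineering=3,
    workflow_systematisation=2,
    output_judgment=2,
    task_decomposition=2,
    applied_adoption=3,
)
print(daily_user.weakest())
```

Reporting the weakest dimensions rather than a single total keeps the next intervention visible: this person needs work on judgment and decomposition, not more tool training.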

Use AI-driven voice interviews to test for operational detail. In a 20-30 minute interview, people reveal whether “daily use” means robust task redesign or just drafting help. They also surface blockers they avoid in forms: uncertainty about legal review, fear of exposing weak prompts, or not knowing when an output is good enough.

Check distribution, not averages. One champion does not make the team mature. A maturity average can hide a team where two people carry the real workflow change and everyone else is still at first-draft level. That distinction matters because the action differs: champions need activation, while “stuck” users need hands-on work on judgment and decomposition, not more generic training.
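A short sketch shows how an average can hide this pattern. It assumes each person already has a single overall score on a 1-5 scale. The function name, the threshold of 4.0 for "advanced", and the example team are all hypothetical.

```python
from statistics import mean, median


def summarise_team(scores: dict[str, float], advanced_at: float = 4.0) -> dict:
    """Report the average alongside who actually carries the advanced usage."""
    if not scores:
        raise ValueError("scores must not be empty")
    values = list(scores.values())
    advanced = sorted(name for name, score in scores.items() if score >= advanced_at)
    return {
        "mean": round(mean(values), 2),
        "median": median(values),
        "advanced_people": advanced,
        "advanced_share": round(len(advanced) / len(values), 2),
    }


# Hypothetical eight-person team: two advanced users, six at first-draft level.
team = {"ana": 5, "ben": 5, "cy": 2, "dee": 2, "eli": 2, "fay": 2, "gus": 2, "hal": 2}
summary = summarise_team(team)
print(summary)
```

Here the mean of 2.75 suggests a middling team, while the median of 2 and the 25% advanced share show that two people carry the change and six are still at first-draft level.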

The AI maturity matrix: which economies are ready for AI?

The AI Maturity Matrix is useful because it shows why adoption speed differs across economies, not just across teams. Market conditions, regulation, digital infrastructure, and execution capacity all shape how fast AI moves from licence access to real workflow change. That means a German compliance team and a US product team should not be judged against the same adoption curve.

Read the matrix as context, not as a verdict on your teams. A country can score well on macro readiness and still contain plenty of teams stuck at shallow adoption. Infrastructure and policy can be strong while workflow redesign remains weak, especially in regulated functions where approval paths and data handling rules slow experimentation (BCG AI Maturity Matrix, 2024; McKinsey State of AI trust in 2026).

Separate policy readiness from workflow readiness. In DACH and wider EU teams, legal review, works council involvement, and data governance often mature faster than day-to-day usage patterns. That is not failure; it just means the bottleneck has moved. We see teams with clear guardrails but no shared method for task decomposition, verification, or handoff design, so AI stays a personal productivity aid instead of becoming a team operating step. McKinsey’s 2026 trust work points in the same direction: maturity rises when ownership and governance are explicit, but governance alone does not create execution (McKinsey State of AI trust in 2026; Gartner AI Maturity Model Toolkit).

Use a two-axis diagnosis before benchmarking across economies. Ask: is this team slow because the market context is restrictive, or because the workflow never got systematised? That is the practical takeaway: when leaders say “our market is behind,” the real issue is often that adoption is fragmented inside the company, not impossible outside it (Accenture Research, 2022; BCG AI Maturity Matrix, 2024).

Are you overestimating your responsible AI maturity?

Yes - most teams overestimate responsible AI maturity because governance matures on a different track from usage. A team can be strong at prompt design, retrieval, and workflow integration, yet still be one incident away from chaos if nobody owns approvals, exceptions, and post-release review. The practical test is not “do we have a policy?” but “who decides, how often do they review, and where does a risky case go on a Tuesday afternoon?”

That’s why frameworks like the NIST AI Risk Management Framework and the EU AI Act matter in practice: they push teams toward named ownership, review cadence, escalation paths, and documented roles and controls, not just a PDF in Confluence or an informal sign-off. This is the difference between a team that has a governance doc and a team that can actually route a high-risk use case through legal, security, and product review without stalling the work. You see the same pattern in companies like Microsoft, which publishes a Responsible AI Standard with clear internal requirements for review and accountability.

McKinsey’s Global AI Trust Maturity Survey points in the same direction, finding that teams with clear responsible-AI ownership - especially AI-specific governance roles or internal audit and ethics functions - show the highest average maturity levels, which is the clearest signal that operating model matters as much as model capability.

Check for named ownership, not committee fog. If legal “reviews AI”, IT “manages tools”, and HR “handles policy”, you probably have diffusion, not governance. In practice, the strongest teams assign one accountable owner per use-case family, plus a standing path into security, legal, and works council where relevant in Europe. This is especially visible in DACH teams: progress comes when business, IT, legal, and HR know exactly when they are involved, instead of forcing every use case into the same slow approval lane (McKinsey, Global AI Trust Maturity Survey; European Commission AI Act overview).

Look for cadence and escalation. A policy PDF is inert unless someone reviews incidents, prompt logs, vendor changes, and exception requests on a fixed rhythm. As of early 2026, many teams have copied “acceptable use” language into internal docs, but the failure point is still operational: no review meeting, no threshold for red-flag use cases, no route for frontline managers to escalate a borderline case. We see this repeatedly after tool rollouts: one product squad may be highly capable, while the adjacent ops team is improvising around customer data and approval boundaries with no shared playbook (NIST AI Risk Management Framework; Accenture, The Art of AI Maturity).

Fix the right bottleneck. If usage is already advanced but governance is weak, more prompt training will not solve the real problem. The intervention is ownership design: define approvers, review triggers, evidence requirements, and escalation paths by workflow. That is how responsible AI stops being paperwork and starts functioning as part of delivery.
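Ownership design of this kind can be captured as one explicit record per workflow. The following Python sketch is an assumption-heavy illustration: the `OwnershipRule` class, its field names, the trigger labels, and the example roles are all hypothetical. Only the four elements (approvers, review triggers, evidence requirements, escalation paths) come from the text.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class OwnershipRule:
    """Who owns a workflow and when a case needs review (all names hypothetical)."""

    workflow: str
    owner: str
    approvers: tuple[str, ...]
    review_triggers: frozenset[str]
    evidence_required: tuple[str, ...]
    escalation_path: tuple[str, ...]

    def reviewers_for(self, case_flags: set[str]) -> tuple[str, ...]:
        """Return who must review a case; an empty tuple means the owner can proceed."""
        if case_flags & self.review_triggers:
            return self.approvers
        return ()


# Hypothetical rule for AI-assisted customer replies.
support_replies = OwnershipRule(
    workflow="ai_assisted_customer_replies",
    owner="support_lead",
    approvers=("legal", "security"),
    review_triggers=frozenset({"customer_data", "new_vendor"}),
    evidence_required=("prompt_log", "sample_outputs"),
    escalation_path=("support_lead", "head_of_operations"),
)

print(support_replies.reviewers_for({"customer_data"}))
print(support_replies.reviewers_for({"internal_notes"}))
```

The value is less in the code than in the forcing function: a rule cannot be written without naming an owner, approvers, and an escalation path, which is exactly what a policy PDF lets teams skip.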

Bottom line

The real decision is to stop measuring AI adoption by logins and self-reported confidence, and start classifying each team by what it actually produces: surface, stuck, growing, or champion. Audit real workflows, use evidence-backed interviews instead of checkbox forms, and map each tier to a specific intervention so you can see which teams need fluency training, workflow redesign, or champion activation.

If you’re scoring teams across the six dimensions (D1-D6) and mapping them to maturity tiers, the hard part isn’t the labels - it’s figuring out where tool fluency stops and workflow change actually starts. That’s where the AI voice interviews and three-level dashboard help: they show which teams are stuck at surface-level prompting, where champions already exist, and what intervention fits next, whether that’s a workshop, a champion push, or a tighter enablement roadmap.


FAQ

How do you measure team AI maturity tiers in practice?

Use a sample of real tasks and compare the AI-assisted version against the old process, then score the gap in time, quality, and rework. A practical setup is 10-15 task samples per team plus 20-30 minute interviews with the people doing the work, so you can see where the workflow actually changes. If you want a cleaner benchmark, track the same tasks again after 90 days and look for movement in output consistency, not just usage.
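The before/after comparison can be as simple as subtracting a baseline measurement from a follow-up on the same tasks. In this minimal sketch the metric names, the example values, and the 90-day framing of the follow-up are assumptions for illustration.

```python
def deltas(baseline: dict[str, float], follow_up: dict[str, float]) -> dict[str, float]:
    """Return follow_up minus baseline for every metric present in both measurements."""
    shared = baseline.keys() & follow_up.keys()
    return {name: round(follow_up[name] - baseline[name], 3) for name in sorted(shared)}


# Hypothetical numbers for one team, measured on the same task sample.
baseline = {"cycle_minutes": 40.0, "rework_rate": 0.30, "quality_score": 3.2}
after_90_days = {"cycle_minutes": 31.0, "rework_rate": 0.18, "quality_score": 3.9}

change = deltas(baseline, after_90_days)
print(change)
```

Negative deltas on cycle time and rework, together with a positive delta on quality, indicate the workflow changed. Movement in usage alone would not show up here at all.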

What is the difference between a champion and a growing AI team?

A growing team can use AI on some tasks, but the workflow still depends on a few people and breaks when the task gets messy or high-stakes. A champion team has repeatable prompts, clear output standards, and enough judgment to use AI without constant supervision. The easiest way to tell them apart is whether the team can teach the workflow to a new joiner without the original power user in the room.

What metrics should I track for team AI maturity tiers?

Track task-level metrics such as cycle time, edit rate, first-pass acceptance, and how often AI output is reused without major rewrite. You should also measure coverage, meaning what share of a team's priority tasks are actually AI-enabled, because a high usage rate on low-value tasks can hide weak maturity. For leadership reporting, add one KPI for champion density - the number of people who can reliably coach others on the workflow.
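These metrics can be computed from a handful of task samples. The sketch below is one possible reading, not a standard definition: the `TaskSample` fields, the way edit rate and coverage are calculated, and the example data are all assumptions.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass(frozen=True)
class TaskSample:
    task_type: str
    cycle_minutes: float
    ai_assisted: bool
    accepted_first_pass: bool
    edited_fraction: float  # 0.0 = AI output used as-is, 1.0 = fully rewritten


def team_metrics(samples: list[TaskSample], priority_tasks: set[str], coaches: int) -> dict:
    """Compute task-level metrics; definitions here are illustrative assumptions."""
    ai = [s for s in samples if s.ai_assisted]
    if not ai or not priority_tasks:
        raise ValueError("need at least one AI-assisted sample and one priority task")
    ai_task_types = {s.task_type for s in ai}
    return {
        "avg_cycle_minutes": mean(s.cycle_minutes for s in samples),
        "edit_rate": mean(s.edited_fraction for s in ai),
        "first_pass_acceptance": sum(s.accepted_first_pass for s in ai) / len(ai),
        # Share of priority task types with at least one AI-assisted sample.
        "coverage": len(ai_task_types & priority_tasks) / len(priority_tasks),
        # People who can reliably coach others on the workflow.
        "champion_density": coaches,
    }


# Hypothetical samples: AI is used, but mostly outside the priority tasks.
samples = [
    TaskSample("ticket_reply", 12, True, True, 0.25),
    TaskSample("ticket_reply", 15, True, False, 0.5),
    TaskSample("spec", 90, False, True, 0.0),
    TaskSample("meeting_summary", 5, True, True, 0.0),
]
priority = {"ticket_reply", "spec", "customer_follow_up", "qbr_deck"}
metrics = team_metrics(samples, priority, coaches=1)
print(metrics)
```

In this example three of four samples are AI-assisted, yet coverage is only 0.25 because a single priority task type is AI-enabled, which is the gap a raw usage rate would hide.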

How often should AI maturity tiers be reassessed?

Quarterly is usually the right cadence because it is long enough for new habits to settle and short enough to catch stalled adoption before it becomes normal. If you are rolling out a new model, policy, or workflow redesign, add a 30-day pulse check on the first cohort so you can spot friction early. Reassessing less often than every six months usually means you will miss whether the intervention actually changed behaviour.

What tools can help assess AI maturity tiers without using surveys?

The most useful tools are interview-based assessment platforms, workflow logs, and document version history in systems like Google Docs, Microsoft 365, Jira, or Notion. Those sources show whether AI output is being edited, approved, or discarded, which is much harder to fake than a self-rating. If you need a lightweight start, export a small set of task artefacts and compare before/after drafts from the same team.